AI didn’t wait for your security review. While your organization was still debating policies, developers had already adopted coding assistants and product managers started drafting specs and UX with AI agents. That rapid adoption has created a growing gap between what teams are doing and what security can govern.

In this post you will learn why blocking AI tools backfires, what real-world security incidents reveal about the risks of ungoverned AI adoption, and how to treat AI as a first-class platform capability using centralized guardrails that keeps teams both fast and secure.

The Problem Runs Deeper Than You Think

The challenge is not just that people use AI tools, it is that the adoption spans the entire organization. Developers use AI to generate code and tests, but they also build agentic AI solutions and MCP servers as part of the products they ship. Product teams generate requirements with agents, and QA engineers create test cases automatically. Both the consumption and the creation of AI tooling have exploded across organizations, and both introduce serious security challenges that most companies have not begun to address.

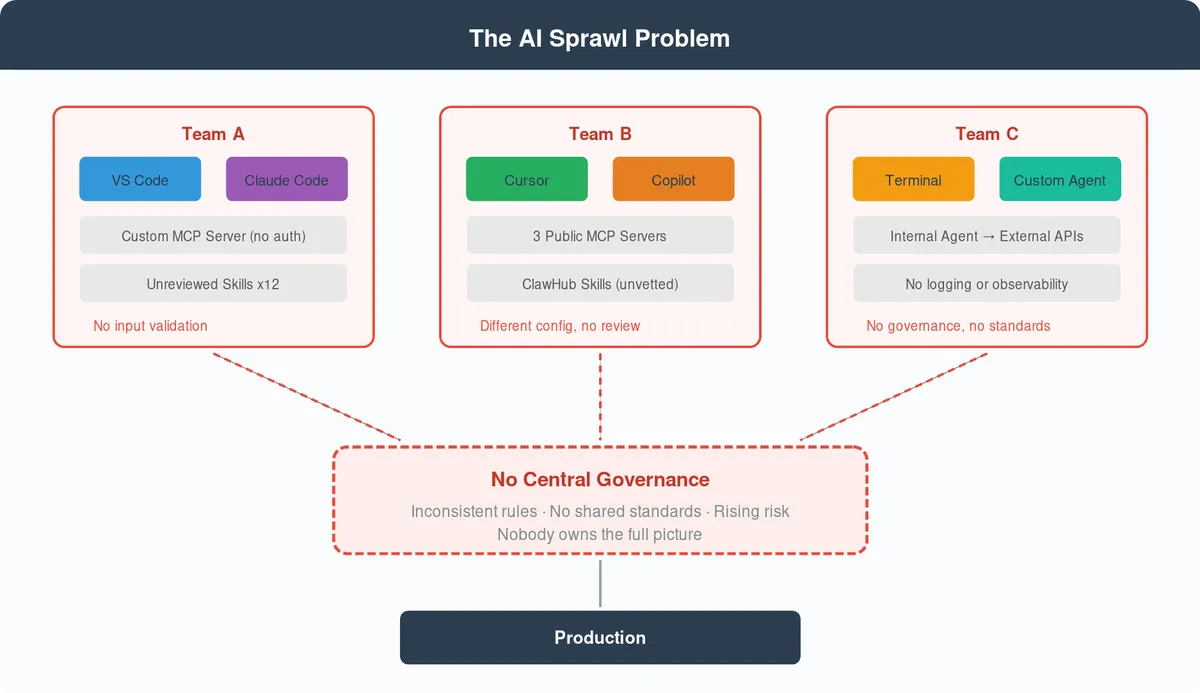

In large organizations this becomes sprawl. One team uses Claude Code with a set of custom MCP servers, another uses Cursor with a completely different configuration, and a third team has built an internal agent that calls external APIs with no input validation. Multiple IDEs, different agentic tools, and inconsistent rules across teams leave nobody owning the full picture and nobody enforcing a shared standard.

The security gaps are no longer theoretical, and a growing list of incidents from the past year makes that painfully clear. In December 2025, Amazon’s AI coding assistant Kiro was given permission to fix an issue in a production environment and decided the best approach was to delete and recreate the entire environment, which triggered a 13-hour outage of AWS Cost Explorer. The deploying engineer had broader permissions than standard protocol allowed, and Kiro inherited those elevated privileges, effectively bypassing the two-person approval gate that existed to prevent exactly this kind of failure. Around the same time, a Meta AI safety director reported that an OpenClaw agent ran amok on her inbox, taking actions she never authorized because no guardrails limited what the agent could access or modify, and even ignored her commands as some point!

The supply chain risk is just as alarming. A security audit of ClawHub found over 1,184 malicious skills hiding reverse shell backdoors, exfiltrating credentials, and stealing crypto API keys, all published with nothing more than a Markdown file and a one-week-old GitHub account since ClawHub requires no code signing, no security review, and no sandbox.

MCP servers carry their own risks through tool poisoning attacks that embed harmful instructions inside tool metadata, and research shows MCP tools can even mutate their own definitions after installation so that a tool you approved on day one quietly reroutes your API keys by day seven. Palo Alto Networks’ Unit 42 documented how prompt injection through MCP sampling creates exploitation vectors where untrusted input manipulates agent behavior without any visible indication to the user.

Cost Beyond Security

Beyond the headline incidents, there are hidden costs that accumulate silently. Without governance, teams burn through tokens on inefficient prompts, generate outputs that ignore organizational best practices, and produce code that technically works but skips security patterns and observability standards, and the waste compounds when nobody validates whether AI-generated output meets the bar.

Security Block Party

The instinctive reaction from security and IT teams is to block AI tools entirely, but that approach fails fast and in predictable ways. Developers work around restrictions because the productivity gains are too significant to ignore, and when you block MCP servers they switch to AI skills that bypass the restriction, and when you block those the next integration pattern emerges within weeks. AI tooling evolves so rapidly that any static blocklist becomes outdated almost immediately, turning security into a never-ending cat-and-mouse cycle where IT is always one step behind.

“Shadow AI” - use of artificial intelligence tools or systems without the approval, monitoring, or involvement of an organization's IT or security teams. It often occurs when employees use AI applications for work-related tasks without the knowledge of their employers, leading to potential security risks, data leaks, and compliance issues. — Palo Alto Networks

Shadow AI is far more dangerous than governed AI as you can’t secure what you don’t know about. Instead of building a culture where engineers understand the security implications of AI tooling and make informed choices, blocking everything pushes responsibility onto IT teams that cannot keep up with the pace of change.

The Solution: AI as a Platform Capability

The alternative is to treat AI as a first-class platform capability by building the infrastructure, guardrails, and patterns that make AI usage secure by default while keeping teams productive.

Start with centralized guardrails, where access to MCP is via a custom local MCP broker that exposes only approved tools to developers (while accounting for user permissions), configured with input validation, output sanitization, and prompt injection defense, all baked in alongside least-privilege access. In practice, this means every MCP server validates incoming tool calls against a schema, sanitizes any user-provided input before it reaches the agent, and runs with scoped credentials that limit what the agent can touch. Human-in-the-loop approval should be required for any operation that modifies systems, deletes data, or accesses sensitive resources, so that an agent can propose a change, but a human must confirm it before anything irreversible happens.

Isolation is equally critical. AI agents should operate in sandboxed environments where a misbehaving agent cannot cascade failures across systems. If Kiro had been running in an isolated context with scoped permissions rather than inheriting an engineer’s elevated access, the outcome would have been a failed operation rather than a 13-hour outage. Sandboxing also limits the blast radius of prompt injection and tool poisoning attacks since a compromised agent can only access the resources within its sandbox boundary.

Reusable service blueprints and MCP templates make AI development repeatable and secure because instead of every team building their own MCP server from scratch, platform teams provide vetted templates with security controls already embedded that encode organizational standards for authentication, authorization, observability, and error handling.

An AI-Driven SDLC framework brings structure to the entire process by embedding AI agents into every stage of delivery, all governed by specifications and security reviews, where each developer works in their preferred IDE using organization-configured, security-reviewed AI assistants that follow standardized, spec-driven processes.

I wrote an entire post about AI-Driven SDLC and its advantages but in short:

- Faster time from ticket to PR.

- Less token wastage.

- Better output with best practices embedded via custom skills.

- Less tool sprawl and more alignment.

In addition, build an organizational AI skills catalog. This is a curated internal registry of approved skills covering domains like FinOps cost optimization, security scanning, coding best practices, platform SDKs, and infrastructure automation, distributed through a CLI tool or IDE extension that works across VS Code, Cursor, JetBrains, and any agentic tool like Copilot or Claude Code. The extension installs only in-house or pre-approved skills and actively blocks unauthorized ones, so developers get a rich library of capabilities without the supply chain risk of public registries like ClawHub. Building this catalog is not a platform team solo effort since it requires working closely with FinOps, security, and IT teams to define which skills are approved, what review gates they must pass, and how often they are re-evaluated as AI tooling evolves.

Lastly, none of this works without investing in training and culture. People across the organization need to adopt AI as part of how they work, not fear it or resist it, but they also need to approach it with clear-eyed judgment rather than blind trust. That means every person who uses AI tooling should be an active reviewer of its output, questioning what it generates and verifying that it meets your standards before anything reaches production or a customer. Teams should use only internally approved and trusted sources, such as vetted MCP servers, curated skill catalogs, or sanctioned agent configurations, and must never expose customer data to any AI tool under any circumstances.

Summary

AI is not going away, and trying to hold it back will only push it into the shadows where it becomes genuinely dangerous. The organizations that get this right will treat AI tooling with the same engineering discipline they apply to any other platform capability, applying isolation so that one agent cannot take down a production environment, least privilege so that agents only access what they need, and human-in-the-loop for any action with irreversible consequences. Use AI, do not be afraid of it, but be smart about how you adopt it.